Hosts and Clusters

Overview

In HyperCX, virtual workloads are deployed on hosts, and each host is part of a cluster. When HyperCX detects that a host is down, the VMs and containers that were running on it are automatically redeployed on a healthy node, which limits the downtime of a service after a hardware failure. Note that this process takes around 6 minutes, which is important to consider for critical applications.

A Hypervisor is a specialized piece of system software that manages and runs virtual machines. Virtalus HyperCX supports two hypervisors running directly on the server (bare metal):

- KVM (Kernel-based Virtual Machine) is a full virtualization solution for Linux on x86 hardware containing virtualization extensions (Intel VT or AMD-V).

- LXD is a next generation system container manager. It offers a user experience similar to virtual machines but using Linux containers instead.

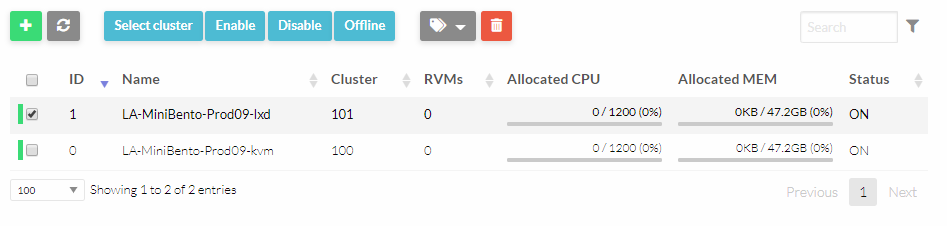

Hosts

On the HyperCX Web Portal, each host represents a hypervisor inside a physical host. This means that a HyperCX Bento infrastructure with four physical servers and support for both the KVM and LXD hypervisors will show 8 hosts in the orchestrator.
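The host count shown in the portal follows directly from this rule. A minimal sketch of the arithmetic, where the function name `portal_host_count` is hypothetical and used only for illustration:

```python
from typing import Sequence


def portal_host_count(physical_servers: int, hypervisors: Sequence[str]) -> int:
    """Each supported hypervisor on each physical server appears as one host."""
    return physical_servers * len(hypervisors)


# Four physical servers with KVM and LXD support show up as 8 hosts.
print(portal_host_count(4, ["KVM", "LXD"]))  # 8
# Three physical servers with KVM and LXD support show up as 6 hosts.
print(portal_host_count(3, ["KVM", "LXD"]))  # 6
```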

Note

Containers cannot be considered virtual instances because they do not comply with the Popek and Goldberg virtualization requirements. For the same reason, LXD cannot technically be considered a hypervisor. For simplicity, this document refers to LXD as a hypervisor.

Showing and Listing Hosts

To display information about a single host, navigate to Infrastructure -> Hosts and then click on the desired host.

The information of a host contains:

- General information about the host, including its name and the drivers used to interact with it.

- Capacity information (Host Shares) for CPU and memory.

- Local datastore information (Local System Datastore), if the host is configured to use a local datastore (e.g. Filesystem in ssh transfer mode).

- Monitoring information, including PCI devices.

- Virtual machines active on the host. Wild VMs are virtual machines that are active on the host but were not started by HyperCX; they can be imported into HyperCX.

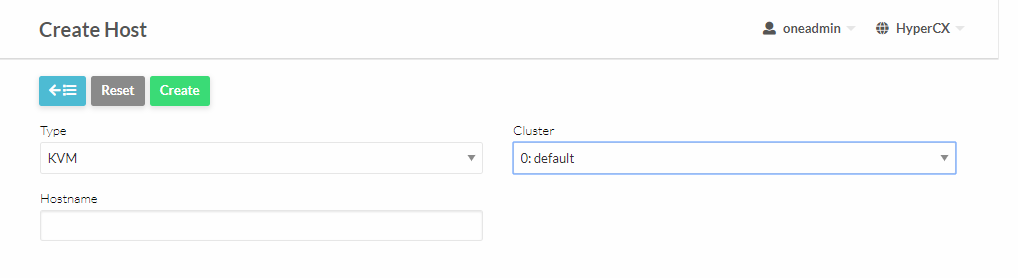

Create and Delete Hosts

To use hosts in HyperCX you need to register them so they are monitored and made available to the scheduler.

- To create a Host, navigate to Infrastructure -> Hosts and then click on the create button.

- To delete a Host, select the desired host and then click on the delete button.

Note

Adding or removing hosts might require intervention from Virtalus unless a server is provided directly by Virtalus.

Importing Wild VMs

The monitoring mechanism in HyperCX reports all VMs found in a hypervisor, even those not launched through HyperCX. These VMs are referred to as Wild VMs, and can be imported to be managed through HyperCX. This includes all supported hypervisors.

- The Wild VMs can be spotted through the Wilds tab after selecting the host.

- Now select the wild VMs that you want to import.

After a Virtual Machine is imported, its life-cycle (including creation of snapshots) can be controlled through HyperCX. However, some operations cannot be performed on an imported VM, including: poweroff, undeploy, migrate or delete-recreate.

Host Life-cycle: Enable, Disable, Offline and Flush

To manage the life cycle of a host, it can be set to different operation modes: enabled (on), disabled (dsbl) and offline (off). The following table describes the operational status for each mode:

| OP. MODE | MONITORING | MANUAL VM DEPLOYMENT | SCHED VM DEPLOYMENT | MEANING |
|---|---|---|---|---|
| ENABLED (on) | Yes | Yes | Yes | The host is fully operational |
| UPDATE (update) | Yes | Yes | Yes | The host is being monitored |
| DISABLED (dsbl) | Yes | Yes | No | Disabled, e.g. to perform maintenance operations |
| OFFLINE (off) | No | No | No | Host is totally offline |
| ERROR (err) | Yes | Yes | No | Error while monitoring the host, use onehost show for the error description |
| RETRY (retry) | Yes | Yes | No | Monitoring a host in error state |
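The table can also be read as a lookup from host state to allowed operations. The following sketch transcribes it into a Python mapping and filters the hosts the scheduler may use. The names `ModeCapabilities`, `HOST_MODES` and `schedulable_hosts`, as well as the host names, are hypothetical; this is not a HyperCX API.

```python
from typing import NamedTuple


class ModeCapabilities(NamedTuple):
    monitoring: bool
    manual_deploy: bool
    sched_deploy: bool


# Transcribed from the table above; keys are the short state names.
HOST_MODES = {
    "on":     ModeCapabilities(True,  True,  True),
    "update": ModeCapabilities(True,  True,  True),
    "dsbl":   ModeCapabilities(True,  True,  False),
    "off":    ModeCapabilities(False, False, False),
    "err":    ModeCapabilities(True,  True,  False),
    "retry":  ModeCapabilities(True,  True,  False),
}


def schedulable_hosts(host_states):
    """Return the names of hosts the scheduler may deploy VMs to."""
    return [name for name, state in host_states.items()
            if HOST_MODES[state].sched_deploy]


# Hypothetical host names and states, for illustration only.
hosts = {"node1-kvm": "on", "node2-kvm": "dsbl", "node3-kvm": "off"}
print(schedulable_hosts(hosts))  # ['node1-kvm']
```

Note that a disabled host still accepts manual deployments, so only the scheduler column is checked here.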

Host Information

Hosts include the following monitoring information.

| Key | Description |
|---|---|
| Hypervisor | Name of the hypervisor of the host, useful for selecting the hosts with a specific technology |
| ARCH | Architecture of the host CPUs, e.g. x86_64 |
| Model Name | Model name of the host CPU, e.g. Intel(R) Core(TM) i7-2620M CPU @ 2.70GHz |
| CPUS-Speed | Speed in MHz of the CPUs |
| Host-Name | As returned by the hostname command |
| Version | This is the version of the monitoring probes. Used to control local changes and the update process |
| MAX_CPU | Number of CPUs multiplied by 100. For example, a 16-core machine will have a value of 1600. The value of RESERVED_CPU will be subtracted from the information reported by the monitoring system. This value is displayed as TOTAL CPU by the onehost show command under HOST SHARE section |
| MAX_Memory | Maximum memory that could be used for VMs. It is advised to take out the memory used by the hypervisor using RESERVED_MEM. This value is subtracted from the memory amount reported. This value is displayed as TOTAL MEM by the onehost show command under HOST SHARE section |
| MAX_Disk | Total space in megabytes in the DATASTORE LOCATION |
| USED_CPU | Percentage of used CPU multiplied by the number of cores. This value is displayed as USED CPU (REAL) by the onehost show command under HOST SHARE section |
| USED_Memory | Memory used, in kilobytes. This value is displayed as USED MEM (REAL) by the onehost show command under HOST SHARE section |
| USED_Disk | Used space in megabytes in the DATASTORE LOCATION |
| FREE_CPU | Percentage of idling CPU multiplied by the number of cores. For example, if 50% of the CPU is idling in a 4-core machine the value will be 200 |
| FREE_Memory | Available memory for VMs at that moment, in kilobytes |
| FREE_Disk | Free space in megabytes in the DATASTORE LOCATION |
| CPU_Usage | Total CPU allocated to VMs running on the host as requested in CPU in each VM template. This value is displayed as USED CPU (ALLOCATED) by the onehost show command under HOST SHARE section |
| Memory_Usage | Total MEM allocated to VMs running on the host as requested in MEMORY in each VM template. This value is displayed as USED MEM (ALLOCATED) by the onehost show command under HOST SHARE section |
| Disk_Usage | Total size allocated to disk images of VMs running on the host computed using the SIZE attribute of each image and considering the datastore characteristics |
| NETRX | Received bytes from the network |
| NETTX | Transferred bytes to the network |
| WILD | Comma separated list of VMs running in the host that were not launched and are not currently controlled by HyperCX |
| ZOMBIES | Comma separated list of VMs running in the host that were launched by HyperCX but are not currently controlled by it |
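The CPU values in the table use a "percentage times cores" scale. As a rough illustration of that arithmetic, the sketch below reproduces the two examples given in the table. The function names are hypothetical, and it assumes RESERVED_CPU is expressed in the same units as MAX_CPU.

```python
def max_cpu(cores, reserved_cpu=0):
    """MAX_CPU: number of cores x 100, minus RESERVED_CPU (assumed same units)."""
    return cores * 100 - reserved_cpu


def free_cpu(cores, idle_percent):
    """FREE_CPU: percentage of idling CPU multiplied by the number of cores."""
    return idle_percent * cores


def used_cpu(cores, used_percent):
    """USED_CPU: percentage of used CPU multiplied by the number of cores."""
    return used_percent * cores


print(max_cpu(16))                    # 1600, the 16-core example
print(max_cpu(16, reserved_cpu=100))  # 1500, one core held back for the hypervisor
print(free_cpu(4, 50))                # 200, the 4-core example at 50% idle
```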

Cluster

A Cluster is a group of Hosts. Clusters can have associated Datastores and Virtual Networks; this is how the administrator defines which Hosts have the underlying requirements for each Datastore and Virtual Network configured.

On HyperCX Bento, one cluster exists for every supported hypervisor. For example, a HyperCX Bento infrastructure with support for KVM and LXD will have 2 preconfigured clusters called KVM and LXD. Each cluster is associated with the hosts running the right hypervisor, a System Datastore optimized for that workload and all the available Virtual Networks.

Each template specifies the cluster on which it will run. This is how the system knows whether a template belongs to a KVM VM or an LXD container. Note that support for LXD is optional. On infrastructures not using LXD containers, if an LXD template is deployed, the virtual instance will stay in the PENDING state indefinitely, since the scheduler will not find the LXD cluster.

Note

For clusters with LXD support enabled, half of the resources of the physical server are assigned to the server's LXD hypervisor instance, while the other half are assigned to the KVM instance. This ratio can be changed at any time, but the combined resources of the LXD and KVM instances on a given host should not exceed the existing resources of that server. Otherwise, the server will be overprovisioned and can fail if its resources become fully exhausted. Virtalus does not guarantee overprovisioned hosts or clusters.
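A quick way to reason about this rule is to compare the sum of both shares against the physical capacity. The sketch below is illustrative only: the function name, the dictionary keys and the server figures are assumptions, not values read from HyperCX.

```python
def is_overprovisioned(physical, kvm_share, lxd_share):
    """True if the KVM and LXD shares together exceed any physical resource."""
    return any(kvm_share[key] + lxd_share[key] > physical[key]
               for key in physical)


# Hypothetical server: 32 cores (CPU value 3200) and 256 GB of memory.
server = {"cpu": 3200, "memory_gb": 256}

# Default split: half of every resource for each hypervisor instance.
half = {key: value / 2 for key, value in server.items()}
print(is_overprovisioned(server, half, half))  # False

# A custom ratio whose CPU shares add up to 4000 > 3200.
kvm = {"cpu": 2400, "memory_gb": 192}
lxd = {"cpu": 1600, "memory_gb": 64}
print(is_overprovisioned(server, kvm, lxd))  # True
```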

CPU Topology

In HyperCX, the virtual topology of a VM is defined by the number of sockets, cores and threads. The following concepts must be understood:

- Cores, Threads and Sockets. A computer processor is connected to the motherboard through a socket. A processor can pack one or more cores, each of which implements a separate processing unit that shares some cache levels, memory and I/O ports. CPU core performance can be improved by hardware multi-threading (SMT), which permits multiple execution flows to run simultaneously on a single core.

Defining a Virtual Topology

Basic Configuration

The most basic configuration is to define just the number of vCPUs (virtual CPUs) and the amount of memory of the VM. In this case, the guest OS will see VCPU sockets of 1 core and 1 thread each. For example, the VM template for a VM with 4 vCPUs is:

    MEMORY = 1024
    VCPU = 4

A VM running with this configuration will see the following topology:

    # lscpu
    ...
    CPU(s): 4
    On-line CPU(s) list: 0-3
    Thread(s) per core: 1
    Core(s) per socket: 1
    Socket(s): 4
    NUMA node(s): 1

CPU Topology

You can give more detail to the previous scenario by defining a custom number of sockets, cores and threads for a given number of vCPUs. Usually, there is no significant performance difference between the ways you arrange the number of cores and sockets. However, some software products may require a specific topology setup in order to work.

For example, a VM with 2 sockets, 2 cores per socket and 2 threads per core is defined by the following template:

    VCPU = 8
    MEMORY = 1024
    TOPOLOGY = [ SOCKETS = 2, CORES = 2, THREADS = 2 ]

and the associated guest OS view:

    # lscpu
    ...
    CPU(s): 8
    On-line CPU(s) list: 0-7
    Thread(s) per core: 2
    Core(s) per socket: 2
    Socket(s): 2
    NUMA node(s): 1
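To confirm from inside the guest that the topology was applied, the lscpu output can be checked programmatically. The sketch below parses text in the format shown above and verifies that sockets, cores and threads multiply to the CPU count. The helper names are hypothetical, and the sample text is copied from the output above rather than captured from a live system.

```python
def parse_lscpu(text):
    """Turn 'Key: value' lines from lscpu output into a dictionary."""
    fields = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if sep:  # skip lines without a colon, such as '...'
            fields[key.strip()] = value.strip()
    return fields


def topology_is_consistent(fields):
    """Check that sockets x cores x threads equals the reported CPU count."""
    product = (int(fields["Socket(s)"])
               * int(fields["Core(s) per socket"])
               * int(fields["Thread(s) per core"]))
    return product == int(fields["CPU(s)"])


sample = """\
CPU(s): 8
On-line CPU(s) list: 0-7
Thread(s) per core: 2
Core(s) per socket: 2
Socket(s): 2
NUMA node(s): 1
"""

fields = parse_lscpu(sample)
print(fields["Socket(s)"], fields["Core(s) per socket"], fields["Thread(s) per core"])
print(topology_is_consistent(fields))  # True
```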

Important

When defining a custom CPU topology you need to set the number of sockets, cores and threads, and their product must match the total number of vCPUs, i.e. VCPU = SOCKETS × CORES × THREADS.
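This constraint is easy to check before a template is submitted. The following sketch builds the template attributes as text and rejects inconsistent values. The function `topology_template` is a hypothetical helper and not part of HyperCX; only the VCPU, MEMORY and TOPOLOGY attributes come from the examples in this section.

```python
def topology_template(memory_mb, sockets, cores, threads, vcpu=None):
    """Render VCPU, MEMORY and TOPOLOGY, enforcing VCPU = SOCKETS x CORES x THREADS."""
    total = sockets * cores * threads
    if vcpu is None:
        vcpu = total  # derive VCPU from the topology when it is not given
    if vcpu != total:
        raise ValueError(
            f"VCPU ({vcpu}) must equal SOCKETS x CORES x THREADS ({total})"
        )
    return (
        f"VCPU = {vcpu}\n"
        f"MEMORY = {memory_mb}\n"
        f"TOPOLOGY = [ SOCKETS = {sockets}, CORES = {cores}, THREADS = {threads} ]"
    )


# Reproduces the 2-socket, 2-core, 2-thread example from this section.
print(topology_template(1024, sockets=2, cores=2, threads=2))

# An inconsistent request is rejected before it reaches the orchestrator.
try:
    topology_template(1024, sockets=2, cores=2, threads=2, vcpu=6)
except ValueError as error:
    print(error)
```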

The topology can be customized in two places.

- On the VM Template. This gives every VM generated from the template the specified topology. You can find related information here.

- On the existing VM. This allows modifying only one specific VM. More related information can be found here.

Summary of Virtual Topology Attributes

| TOPOLOGY attribute | Meaning |
|---|---|
| PIN_POLICY | vCPU pinning preference: CORE, THREAD, SHARED and NONE |
| SOCKETS | Number of sockets or NUMA nodes |
| CORES | Number of cores per node |
| THREADS | Number of threads per core |

FAQ

Q: Why are 6 hosts showing up for a cluster of 3 physical nodes?

A: HyperCX offers both LXD and KVM on the same physical node, so each node appears as 2 hosts. This is why 3 physical nodes show up as 6 hosts.